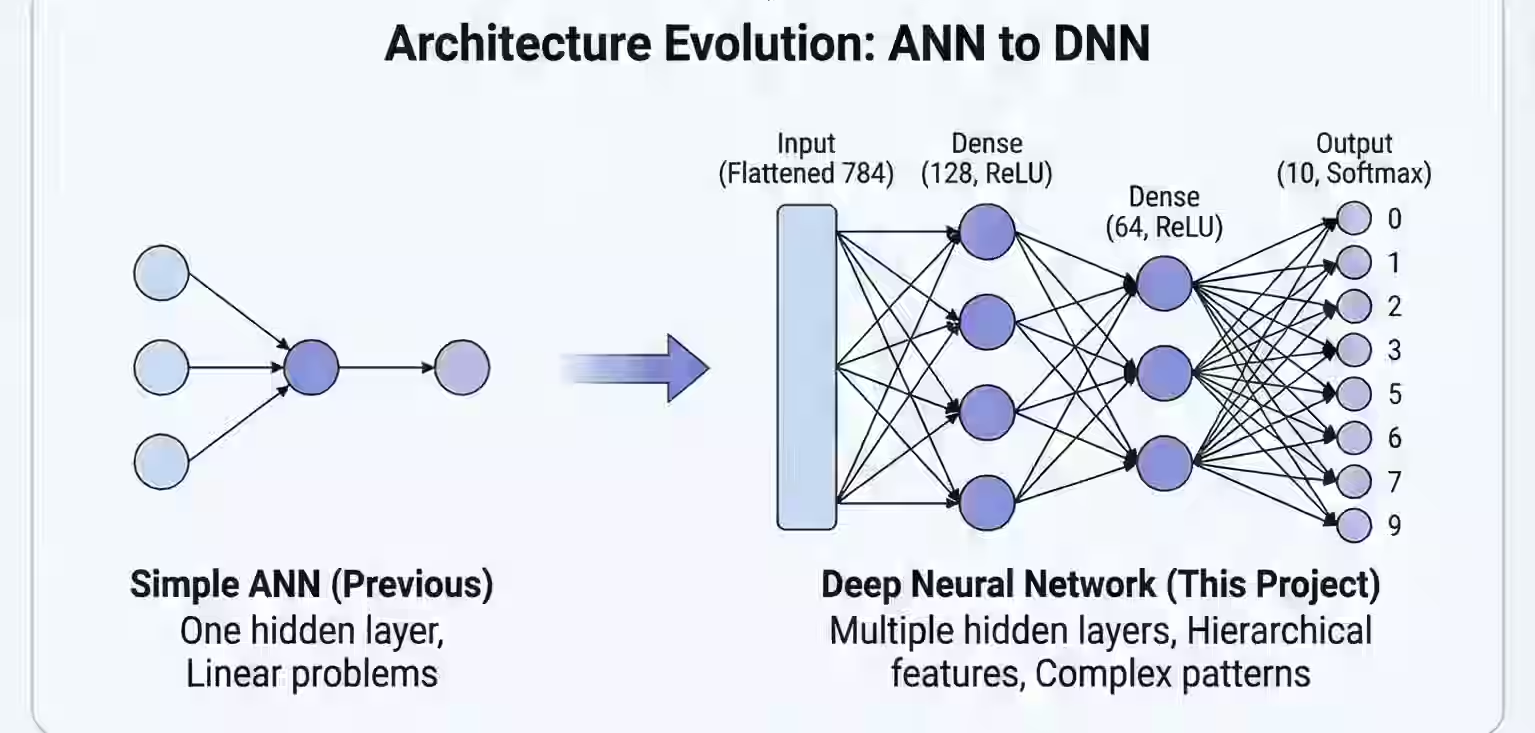

After building my first ANN to predict exam scores, I was ready for the next challenge: Deep Neural Networks. This time, instead of predicting one number, the network would recognize handwritten digits (0-9) from images!

🎯 What Makes This Different from ANN?

| ANN (Previous Project) | DNN (This Project) |

|---|---|

| Input: 1 number (hours) | Input: 784 numbers (28×28 image) |

| Output: 1 number (score) | Output: 10 classes (digits 0-9) |

| 1 layer | Multiple hidden layers |

| Linear problem | Non-linear, complex patterns |

Key insight: When problems get complex, we need depth — multiple layers that learn hierarchical features.

🖼 What is MNIST?

MNIST is the “Hello World” of machine learning — a dataset of 70,000 handwritten digit images:

- Image size: 28 × 28 pixels

- Color: Grayscale (0-255)

- Labels: Digits 0 through 9

- Training set: 60,000 images

- Test set: 10,000 images

🧠 The DNN Architecture

Here’s the model that achieves 97%+ accuracy:

model = tf.keras.Sequential([

tf.keras.layers.Flatten(),

tf.keras.layers.Dense(128, activation='relu'),

tf.keras.layers.Dense(64, activation='relu'),

tf.keras.layers.Dense(10, activation='softmax')

])Let me break down each layer:

🔹 Layer 1: Flatten()

What it does: Converts the 2D image into a 1D vector.

Before: 28 × 28 image (matrix)

After: 784 numbers → [x1, x2, x3, ... x784]Why needed? Dense layers expect a flat vector, not a 2D grid.

🔹 Layer 2: Dense(128, activation=‘relu’)

What it does:

- 128 neurons, each connected to ALL 784 input pixels

- Learns simple patterns (edges, curves)

ReLU activation:

output = max(0, x)Why ReLU? Adds non-linearity. Without it, stacking layers would just be linear math — no real “depth.”

🔹 Layer 3: Dense(64, activation=‘relu’)

What it does:

- 64 neurons receiving input from the 128 neurons above

- Combines simple features into complex patterns

- Learns digit-specific shapes

This is hierarchical learning — building complex understanding from simple parts!

🔹 Layer 4: Dense(10, activation=‘softmax’)

What it does:

- 10 neurons — one for each digit (0-9)

- Outputs probabilities that sum to 1.0

Example output:

[0.01, 0.02, 0.90, 0.01, 0.01, 0.02, 0.01, 0.01, 0.01, 0.00]

↑

Digit 2 has 90% probability → Prediction: 2📊 Complete Training Code

import tensorflow as tf

# 1. Load MNIST dataset

(x_train, y_train), (x_test, y_test) = tf.keras.datasets.mnist.load_data()

# 2. Normalize pixel values (VERY IMPORTANT!)

x_train = x_train / 255.0

x_test = x_test / 255.0

# 3. Build the DNN model

model = tf.keras.Sequential([

tf.keras.layers.Flatten(input_shape=(28, 28)),

tf.keras.layers.Dense(128, activation='relu'),

tf.keras.layers.Dense(64, activation='relu'),

tf.keras.layers.Dense(10, activation='softmax')

])

# 4. Compile model

model.compile(

optimizer='adam',

loss='sparse_categorical_crossentropy',

metrics=['accuracy']

)

# 5. Train

model.fit(x_train, y_train, epochs=5, validation_split=0.1)

# 6. Evaluate

model.evaluate(x_test, y_test)🤔 Questions I Asked (And Finally Understood)

Q: Why normalize pixels by dividing by 255?

Answer: Raw pixel values (0-255) are too large and vary too much. Normalizing to 0-1:

- Makes training more stable

- Helps gradients flow better

- Speeds up convergence

x_train = x_train / 255.0 # Now values are 0.0 to 1.0Q: Why use Adam instead of SGD?

Answer: Adam is “smarter” than basic SGD:

- Adaptive Moment Estimation

- Automatically adjusts learning rate per parameter

- Works well out-of-the-box

For most deep learning, Adam is the default choice!

Q: What is sparse_categorical_crossentropy?

Answer: It’s the loss function for multi-class classification with integer labels.

- Sparse = labels are integers (0, 1, 2, … 9)

- Categorical = multiple classes

- Crossentropy = measures how wrong the probability distribution is

If labels were one-hot encoded, we’d use categorical_crossentropy instead.

Q: What’s the difference between ANN and DNN?

Answer: DNN = ANN with multiple hidden layers.

| Feature | ANN | DNN |

|---|---|---|

| Hidden layers | 0-1 | 2+ ✅ |

| Neurons | Few | Many ✅ |

| Learning | Simple patterns | Complex, hierarchical ✅ |

| Use case | Linear problems | Images, text, complex data |

Key insight: All DNNs are ANNs, but not all ANNs are “deep.”

🧠 What Each Layer Learns (Hierarchical Features)

| Layer | What It Learns |

|---|---|

| Flatten | Raw pixels |

| Dense 128 | Edges, simple curves |

| Dense 64 | Digit parts (loops, lines) |

| Dense 10 | Final digit classification |

This is why deep learning works — it builds understanding layer by layer!

⚠️ Overfitting: The DNN Trap

Problem: If you add too many layers/neurons:

- Training accuracy goes UP ↑

- Test accuracy goes DOWN ↓

The model memorizes training data instead of learning patterns!

Solutions:

- Dropout: Randomly disable neurons during training

- Regularization: Penalize large weights

- Early stopping: Stop training when validation accuracy plateaus

- Less depth: Sometimes simpler is better

📈 Results

After just 5 epochs:

- Training accuracy: ~98%

- Validation accuracy: ~97%

- Test accuracy: ~97-98%

The DNN correctly classifies handwritten digits with near-human accuracy! 🎉

🚀 What I Learned (Key Takeaways)

✅ Depth matters — multiple layers learn complex patterns

✅ Flatten converts images to vectors for Dense layers

✅ ReLU adds non-linearity (essential for learning)

✅ Softmax outputs probabilities for multi-class problems

✅ Normalization is crucial for stable training

✅ Adam optimizer works better than basic SGD

✅ Overfitting is the enemy — regularize!

📁 Try It Yourself

I’ve open-sourced this project with full code and explanations:

🔗 GitHub: DNN Handwritten Digit Classification

🎯 What’s Next?

This DNN works great for simple images, but for complex images (cats, dogs, faces), we need something better: Convolutional Neural Networks (CNNs).

Stay tuned for my next project where I build a CNN to classify cats vs dogs! 🐱🐶

Questions about DNNs? Feel free to reach out — explaining concepts helps me learn too! 🧠✨